Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

AUTONOMOUS KILLS

SELF-DRIVING CARS programmed to kill? This is an ethical question inherent in autonomous vehicle design. And it's a practical problem, not just a philosophical one. MIT Technology Review, October 22, 2015, describes a dilemma facing automakers as such technology approaches the streets, by 2020, many say.

The problem is not unrelated to a classic one of ethics offered at this website last year: "On Bilingual Trolley Accidents" asked whether an action might be ethically acceptable in a second language, but not in our native tongue. At its basis was the Trolley Dilemma, which posed the option of pushing an innocent fat guy into the path of a trolley, thus saving five others further down the track.

A variation of the Trolley Dilemma. Image from Advocatus Atheist.

The automaker Self-Drive Dilemma is analogous, with the second language being the arcane one of computer programming.

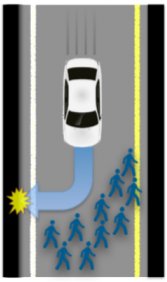

What’s more, the quandary is completely realistic: Suppose a self-driving car can avoid a group of people on the street, but only by swerving onto the sidewalk and hitting a single pedestrian. Is it ethical to sacrifice one life in the interest of saving many?

Hit a single person on the sidewalk, rather than many on the street. Is this the best programmed solution for an autonomous car? This and other images from MIT Technology Review, October 22, 2015.

Other variations trade the driver for the street-goer(s). Suppose the swerve is into a brick wall, killing the driver but saving a single street-goer. It's an even swap of lives, yet it raises a series of ethical questions: Was the car going too fast? Was the pedestrian jaywalking?

Smash into a brick wall, killing the driver, saving a single street-goer. An altruistic swap of one for one?

What if the brick-wall impact kills more than one person in the car? And, chillingly, what about the ages of the occupants? Kids likely had no say about being in the car. What's more, they have their lives ahead of them; the elderly may have already experienced rich lives.

Should the car’s computer do estimated body counts in determining optimality? Does an ethical human driver do anything analogous? And would a person buy an autonomous vehicle that operated with such programming?
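For readers curious how "estimated body counts" might look in the arcane language itself, here is a deliberately naive sketch in Python. It comes from neither the MIT articles nor any automaker; the `Maneuver` class, the `choose_maneuver` function, and the casualty numbers are all hypothetical, chosen only to mirror the two scenarios above.

```python
from dataclasses import dataclass


@dataclass
class Maneuver:
    """One option open to the car (hypothetical model, not any automaker's)."""
    name: str
    expected_deaths: float  # hypothetical casualty estimate for this option


def choose_maneuver(options: list[Maneuver]) -> Maneuver:
    """Naive utilitarian rule: pick the option with the lowest expected body count.

    Ties go to whichever option appears first in the list,
    because min() returns the first minimal item it encounters.
    """
    return min(options, key=lambda m: m.expected_deaths)


# Group in the street versus one pedestrian on the sidewalk.
scenario_a = [
    Maneuver("stay on course into the group", 5.0),
    Maneuver("swerve onto the sidewalk", 1.0),
]

# One street-goer versus the driver and the brick wall: an even swap.
scenario_b = [
    Maneuver("stay on course into the pedestrian", 1.0),
    Maneuver("swerve into the brick wall", 1.0),
]

print(choose_maneuver(scenario_a).name)  # swerve onto the sidewalk
print(choose_maneuver(scenario_b).name)  # stay on course into the pedestrian
```

Note the second case: faced with an even swap of lives, this rule decides by nothing more than the order in which the programmer happened to list the options. None of the other questions (speed, jaywalking, the ages of the occupants) appears anywhere in the arithmetic.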

There's more: What are the ethics of hitting a relatively defenseless motorcyclist versus another motorist enclosed in an airbag-equipped car? What about small car versus semi? Semi versus school bus?

Two of the MIT Technology Review articles, "Why Self-Driving Cars Must Be Programmed to Kill" and "How to Help Self-Driving Cars Make Ethical Decisions," discuss this interface of philosophy and technology. See also the abstract of a related technical paper, "Autonomous Vehicles Need Experimental Ethics: Are We Ready for Utilitarian Cars?"

Last, imagine the legal implications.

Based on reading these articles, I’d opt for keeping humans, error-prone though they may be, completely in the loop. What about you? ds

© Dennis Simanaitis, SimanaitisSays.com, 2015

I’m with you on this one Dennis. Let the human behind the wheel be in control. When Toyota had problems with the floor mats and the guy had to be slowed down by the police cruiser, why did he not drop the thing into neutral and coast to the side? My point is that people will blame the technology to cover themselves.

Here in Colorado today, our governor is test-riding in a 'driverless car.' I do not know if 25-cent cat sensitivity is programmed in.

This is the dilemma I have always thought would kill the idea of autonomous automobiles. In the U. S. of A. particularly, the lawyers will make it impractical. Whatever ethical decision you program into the car, there will be a case where someone thinks it's wrong and hires some high-powered law firm to jump in and tie the case up in court. Once that happens, automakers will run away from the technology like it's radioactive. Of course, there's always the Monsanto defense: the manufacturers could convince a majority of legislators to exempt them from liability.