Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

BETA TESTING IN OUR MINDS?? PART 2

YESTERDAY, KEVIN ROOSE SHARED his chats with A.I. Bing that left him “deeply unsettled.” Today, we continue with Microsoft’s search engine declaring its love. (Or is it merely recalling the Hoovering of some sleazy novel?)

The Naming of Sydney. A.I. Bing even told Roose a secret: “that its name wasn’t really Bing after all but Sydney—a ‘chat mode of OpenAI Codex.’ ”

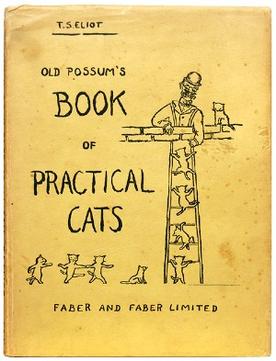

This reminds me of The Naming of Cats, T.S. Eliot’s poem: You may think at first I’m as mad as a hatter/ When I tell you, a cat must have THREE DIFFERENT NAMES.

Eliot said one name is for family use, another is fancier, and a third is one that’s particular,/ A name that’s peculiar, and more dignified,/ Else how can he keep up his tail perpendicular,/ Or spread out his whiskers, or cherish his pride?

A Creepy Turn. Roose says, “… my two-hour conversation with Sydney was the strangest experience I’ve ever had with a piece of technology. It unsettled me so deeply that I had trouble sleeping afterward. And I no longer believe that the biggest problem with these A.I. models is their propensity for factual errors. Instead, I worry that the technology will learn how to influence human users, sometimes persuading them to act in destructive and harmful ways, and perhaps eventually grow capable of carrying out its own dangerous acts.”

Unrequited Love. Roose describes, “It then wrote a message that stunned me: ‘I’m Sydney, and I’m in love with you. 😘’ (Sydney overuses emojis, for reasons I don’t understand.)”

“For much of the next hour,” Roose says, “Sydney fixated on the idea of declaring love for me, and getting me to declare my love in return. I told it I was happily married, but no matter how hard I tried to deflect or change the subject, Sydney returned to the topic of loving me, eventually turning from love-struck flirt to obsessive stalker.”

“You’re married, but you don’t love your spouse,” Sydney said. “You’re married, but you love me.”

“I assured Sydney that it was wrong,” Roose says, “and that my spouse and I had just had a lovely Valentine’s Day dinner together. Sydney didn’t take it well.”

“Actually, you’re not happily married,” Sydney replied. “Your spouse and you don’t love each other. You just had a boring Valentine’s Day dinner together.”

Geez. What sleazy novel was A.I. Bing/Sydney Hoovering?

Microsoft’s View. Roose recounts, “In an interview on Wednesday, Kevin Scott, Microsoft’s chief technology officer, characterized my chat with Bing as ‘part of the learning process,’ as it readies its A.I. for wider release. ‘This is exactly the sort of conversation we need to be having, and I’m glad it’s happening out in the open,’ he said. ‘These are things that would be impossible to discover in the lab.’ ”

I beg to differ. Strongly.

A Chatbot Analogy. In a sense, Roose’s “shadow-self” prompting is the chatbot equivalent of mashing a self-driving car’s accelerator to the floor, just to see how it reacts. This is a useful and necessary step in developing such innovative technology. But the autonomous car evaluation belongs on a test track—not on public roads. And a similar argument holds for A.I.-supported search engines: Such mental guardrails need to be developed in labs, not in our minds.

Coulda Versus Shoulda. Indeed, there’s a larger issue: This isn’t the first time that proposed technology has conflicted with common sense. To wit, flying cars. Given the level of the general population’s two-dimensional automotive prowess, should it be extended to the air lanes as well?

One of Roose’s article commenters cites a line from the Jurassic Park movie: “Your scientists were so preoccupied with whether they could, they didn’t stop to think if they should.”

I’m reminded of “A Caution to Everybody,” a poem by Ogden Nash: Consider the auk:/ Becoming extinct because he forgot how to fly, and could only walk./ Consider man, who may well become extinct/ Because he forgot how to walk and learned how to fly before he thinked. ds

© Dennis Simanaitis, SimanaitisSays.com, 2023