Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

WORKING THE INTERNET’S EDGE WITH ANALOG OPTICS

WE LIVE IN DIGITAL TIMES. Yet, as noted recently here in SimanaitisSays, analog computing is having a resurgence.

“Delocalized Photonic Deep Learning on the Internet’s Edge,” by Alexander Sludds et al., Science, October 20, 2022, offers an example of this, albeit with adjectives and nouns begging for definition to the benefit of us non-specialists. Here are tidbits on this tantalizing research.

Background. Deep Learning uses artificial neural networks to mimic the learning process of the human brain. It identifies inferences (loosely, if/then relationships) by analyzing vast amounts of data.

This deep learning is photonic if it employs photons not electrons; that is, optical fibers in lieu of electrical wires. A benefit of photonics is its support of higher data rates, of potentially more machine learning in a given nanosecond.

Delocalizing this learning splits the process into tasks capable of being performed by a multiplicity of less powerful devices, even by devices as ubiquitous as cell phones.

Learning on the Edge. The researchers note, “Smart devices such as cell phones and sensors are low-power electronics operating on the edge of the internet. Although they are increasingly more powerful, they cannot perform complex machine learning tasks locally. Instead, such devices offload these tasks to the cloud, where they are performed by factory-sized servers in data centers, creating issues related to large power consumption, latency, and data privacy.”

They offer an alternative to this: “We introduce an approach to machine learning inference based on delocalized analog processing across networks. In this approach, named Netcast, cloud-based ‘smart transceivers’ stream weight data to edge devices, enabling ultraefficient photonic inference.”
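
To make the division of labor concrete, here is a toy sketch, not the researchers' implementation. It assumes a made-up `cloud_weight_stream` generator standing in for the cloud-based smart transceiver, and a made-up `edge_inference` function standing in for the edge device. The point it illustrates is only that the edge device never stores the full weight matrix; it consumes weights as they arrive.

```python
def cloud_weight_stream(weight_matrix):
    """Hypothetical stand-in for the cloud side: send one row of weights at a time."""
    for row in weight_matrix:
        yield row


def edge_inference(weight_stream, local_input):
    """Hypothetical edge device: holds only its own input and the row in flight."""
    outputs = []
    for row in weight_stream:
        # Combine the streamed weights with the locally held input values.
        outputs.append(sum(w * x for w, x in zip(row, local_input)))
    return outputs


# Illustrative numbers only; they do not come from the paper.
weights_in_cloud = [[0.5, -1.0], [2.0, 0.25]]
sensor_reading = [4.0, 8.0]
print(edge_inference(cloud_weight_stream(weights_in_cloud), sensor_reading))
```

In this sketch the streaming is ordinary software; in Netcast, per the quote above, the weight data arrive optically.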

Linear Algebra’s Matrices. The computational demands of deep learning rely on the linear algebra of matrices, of rectangular arrays of values. Ryan Hamerly offers details in “The Future of Deep Learning is Photonic,” IEEE Spectrum, June 29, 2021.

Modern computer hardware, Hamerly notes, “has been very well optimized for matrix operations…. The relevant matrix calculations for deep learning boil down to a large number of multiply-and-accumulate operations, whereby pairs of numbers are multiplied together and their products added up.”
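
For us non-specialists, a minimal sketch of what "multiply-and-accumulate" means may help. The function names `multiply_accumulate` and `matrix_vector` are my own, chosen for illustration; real deep-learning software uses heavily optimized libraries rather than plain loops like these.

```python
def multiply_accumulate(weights, inputs):
    """Multiply pairs of numbers and add up the products."""
    total = 0.0
    for w, x in zip(weights, inputs):
        total += w * x  # one multiply-and-accumulate operation
    return total


def matrix_vector(matrix, vector):
    """A matrix times a vector is one multiply-and-accumulate pass per row."""
    return [multiply_accumulate(row, vector) for row in matrix]


W = [[1.0, 2.0],
     [3.0, 4.0]]
x = [5.0, 6.0]
print(matrix_vector(W, x))  # [17.0, 39.0]
```

Even this tiny 2-by-2 example takes four multiply-and-accumulate steps; a matrix with thousands of rows and columns takes millions.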

Digital Overwork ⇒ Analog Photonics. However, with advances in deep learning come vast increases in the number of these operations. “The usual solution,” Hamerly notes, “is simply to throw more computing resources—along with time, money, and energy—at the problem.”

“As a result,” Hamerly notes, “training today’s large neural networks often has a significant environmental footprint. One 2019 study found, for example, that training a certain deep neural network for natural-language processing produced five times the CO2 emissions typically associated with driving an automobile over its lifetime.”

Succinctly, Moore’s Law (“Transistor density doubles every two years”) is “running out of steam.” And analog photonics is a promising alternative.

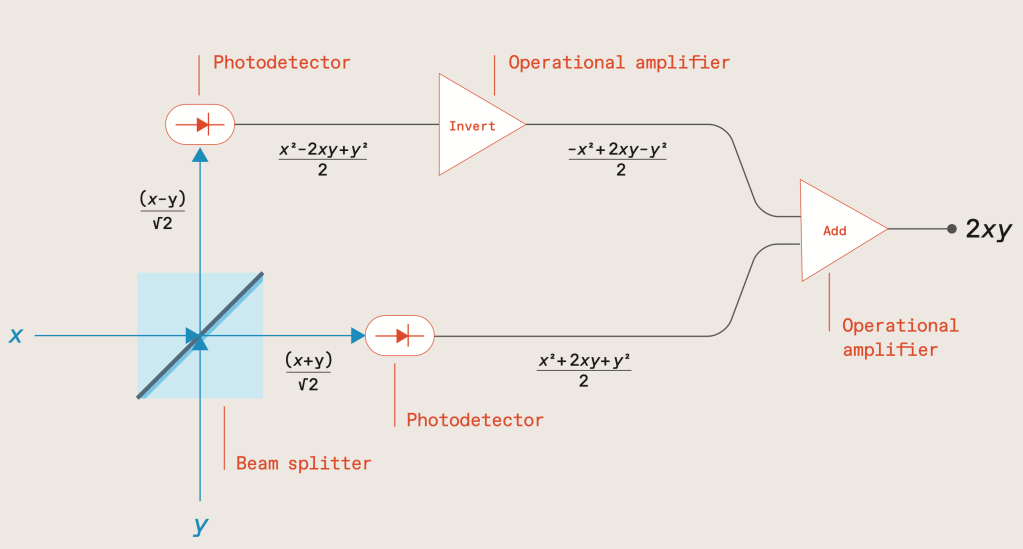

Multiplying with Light. Hamerly describes how photonic multiplication operates: “Two beams whose electric fields are proportional to the numbers to be multiplied, x and y, impinge on a beam splitter (blue square). The beams leaving the beam splitter shine on photodetectors (ovals), which provide electrical signals proportional to these electric fields squared. Inverting one photodetector signal and adding it to the other then results in a signal proportional to the product of the two inputs.”
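
The arithmetic behind this trick can be checked numerically. What follows is a sketch under stated assumptions, not Hamerly's own model: I assume an ideal, lossless 50/50 beam splitter whose two outputs are the sum and the difference of the input fields, each scaled by 1/√2, and I treat the fields as plain real numbers. The function name `optical_multiply` is hypothetical.

```python
import math


def optical_multiply(x, y):
    """Simulate the beam-splitter multiplier for real-valued fields x and y."""
    # Assumed ideal 50/50 beam splitter: sum and difference of the two fields.
    out_plus = (x + y) / math.sqrt(2)
    out_minus = (x - y) / math.sqrt(2)

    # Each photodetector signal is proportional to its field squared.
    signal_plus = out_plus ** 2
    signal_minus = out_minus ** 2

    # Invert one signal and add it to the other:
    # (x + y)^2 / 2 - (x - y)^2 / 2 = 2xy, which is proportional to x times y.
    combined = signal_plus - signal_minus
    return combined / 2  # remove the constant factor of 2


print(optical_multiply(3.0, 4.0))
```

The squared terms x² and y² cancel in the subtraction, leaving only the cross term, which is why the combined signal tracks the product of the two inputs.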

And Exceedingly Quickly. What with photons being more efficient than electrons, Sludds and his colleagues report that “Netcast allows milliwatt-class edge devices with minimal memory and processing to compute at teraFLOPS rates reserved for high-power (>100 watts) cloud computers.”

Their edge computing architecture “makes use of the strengths of photonics and electronics to achieve orders of magnitude in energy efficiency and optical sensitivity improvements over existing digital electronics.”

Quite an achievement. And the rest of us get to learn a new way of multiplying optically. ds

© Dennis Simanaitis, SimanaitisSays.com, 2022

This entry was posted on November 21, 2022 by simanaitissays in Sci-Tech and tagged "Delocalized Photonic Deep Learning on the Internet's Edge" A. Sludds et al (MIT Nokia), analog photonics (in neural network computing), deep learning (artificial neural networks mimic brain), linear algebra matrix multiplication, multiplying with light (beam splitter photo detector inverter addition), photonics: photons not electrons (optical data rate higher than wire).

Shortlink: https://wp.me/p2ETap-f5C
