Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

I’VE GOT A SONG IN MY HEAD—BUT WHERE?

DIFFERENT REGIONS OF the human brain handle different processes. Its right hemisphere controls the left side of the body and is popularly described as the more artistic and creative hemisphere; the left hemisphere controls the body’s right side and is said to be the more academic and logical.

That is, our music appreciation is a right-brain function; our language skills reside on the left.

So, I’ve got this song in my head, both lyrics and melody. Where does it reside?

Science, February 28, 2020, addresses this in Daniela Sammler’s “Splitting Speech and Music.” “How do listeners extract words and melodies from a single sound wave?” she asks.

Her article and an associated technical paper by Albouy et al. offer “evidence for the biophysical basis of the long-debated, yet still unresolved, hemispherical asymmetry of speech and music perception in humans. They show that the left and right auditory regions of the brain contribute differently to the decoding of words and melodies in song.”

Methodology as a Paradigm of Science. In “Distinct Sensitivity to Spectrotemporal Modulation Supports Brain Asymmetry for Speech and Melody,” Albouy and his colleagues give a fine example of the scientific approach: recognizing existing knowledge, proposing an extension of the theory, devising a means of testing it, then checking whether the extended theory is confirmed.

Background. Sammler describes how, in 1861, French anatomist Pierre Paul Broca “astounded his Parisian colleagues with the observation that speech abilities are perturbed after lesions in the left, but not right, brain hemisphere. This seminal insight not only ended the long-held view of symmetrical brain organization; it also launched the quest for asymmetries in other cognitive domains.”

Previous research has suggested that speech perception depends strongly, though not solely, on short-lived temporal modulations: for example, the “b” and “p” sounds that differentiate “bear” from “pear.”

By contrast, music perception requires processing the detailed spectral composition of sounds, that is, fine differences in frequency content rather than short-lived temporal changes.

Separating the Two Perceptions. Albouy and his colleagues began with recordings of 100 carefully constructed unaccompanied vocals. To these, they applied special filters that incrementally removed either the temporal details or the spectral details of each song.

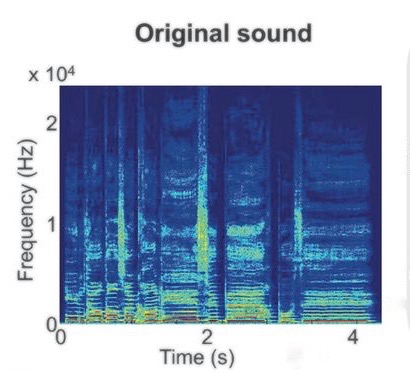

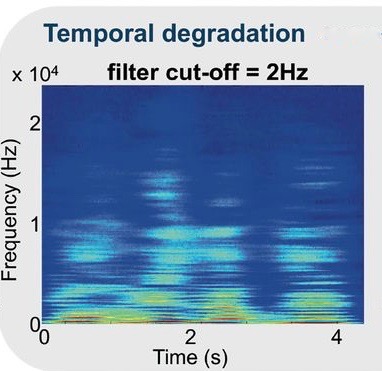

Above, an unfiltered vocal. Immediately below, the vocal after passing through a 1.8-cyc/kHz spectral filter; below that, the vocal after passing through a 2-Hz temporal filter. These and the following images are from Albouy et al.
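For the technically curious, here is a minimal Python sketch of the general idea behind such filters, under stated assumptions. It treats a sound as a spectrogram (frequency by time); the two-dimensional Fourier transform of that spectrogram is its "modulation spectrum," with temporal modulation in Hz along one axis and spectral modulation in cycles/kHz along the other. Low-passing one axis blurs the corresponding detail. The function name modulation_filter and its parameters are hypothetical, and this is a generic modulation-domain filter, not Albouy et al.'s code; unlike their procedure, it stops at the filtered spectrogram rather than turning it back into audio.

```python
import numpy as np


def modulation_filter(spec, dt, df, max_temporal_hz=None, max_spectral_cyc_per_khz=None):
    """Low-pass a spectrogram in the modulation domain (hypothetical helper).

    spec: 2-D array shaped (n_freq, n_time), e.g. a log-magnitude spectrogram.
    dt:   seconds per time frame.
    df:   kHz per frequency bin.
    A cutoff left as None means that axis is not filtered.
    """
    n_freq, n_time = spec.shape
    # The 2-D Fourier transform of the spectrogram is its modulation spectrum.
    mod = np.fft.fft2(spec)
    # Spectral modulation (cycles/kHz) runs along rows, temporal modulation (Hz) along columns.
    spectral_mod = np.fft.fftfreq(n_freq, d=df)
    temporal_mod = np.fft.fftfreq(n_time, d=dt)

    keep = np.ones(mod.shape, dtype=bool)
    if max_temporal_hz is not None:
        # Drop fast temporal changes (the "bear" vs "pear" kind of detail).
        keep &= (np.abs(temporal_mod) <= max_temporal_hz)[np.newaxis, :]
    if max_spectral_cyc_per_khz is not None:
        # Drop fine spectral detail (closely spaced frequency structure).
        keep &= (np.abs(spectral_mod) <= max_spectral_cyc_per_khz)[:, np.newaxis]

    # The mask is symmetric in +/- modulation, so the inverse is real up to rounding.
    return np.real(np.fft.ifft2(mod * keep))
```

With, say, 10-ms frames and 0.1-kHz bins, modulation_filter(spec, 0.01, 0.1, max_temporal_hz=2.0) mimics the 2-Hz temporal degradation in the caption, and max_spectral_cyc_per_khz=1.8 mimics the 1.8-cyc/kHz spectral one.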

Participants listened to sets of the 100 vocals and their filtered versions.

An example of the test methodology. English-speaking participants showed somewhat different, though broadly similar, results.

The listeners’ brains were monitored by functional magnetic resonance imaging (fMRI). The resulting data were fed to a machine-learning program that identified patterns of brain activity correlated with the words or melodies the participants were hearing.

Sammler notes, “… the authors established that the stepwise removal of temporal detail impairs recognition of the words (but not recognition of the melodies), whereas the degradation of spectral detail weakens recognition of the melodies (but not recognition of the words).”

Researchers say there’s no absolute split between the two. For example, as Sammler observes, “… musicians could never play in sync without perceived subtle rhythmic deviations,” which, of course, are temporal. Rather, as other research has suggested, the left and right auditory regions contribute in weighted proportions to different aspects of both domains.

Have I ever told you that “Amazing Grace” and “The Mickey Mouse Song” have interchangeable melodies? And what does this tell you about my brain? ds

© Dennis Simanaitis, SimanaitisSays.com, 2020

So are earworms right brain or left brain?

Perhaps more research is indicated.

Thank you for this article! My research partner and I were assigned this paper for a presentation and had a difficult time deciphering the figures (:

Me too, Kaitlyn. (Just curious, are you related to an old friend of mine?)

I hope you found all this research interesting, just as I did, even with its deciphering challenges.