Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

PLATO, THE INTERNET, EDUCATION, AND A.I.

“WHAT WOULD PLATO SAY about ChatGPT?” asks Zeynep Tufekci, a young lady with more than just an interesting name: Dr. Tufekci is a sociologist, a professor at Columbia University’s Craig Newmark Center for Journalism Ethics and Security and a faculty associate at the Berkman Klein Center for Internet and Society at Harvard University. She’s also a columnist for The New York Times, where she posed this question as an Opinion Editorial, December 15, 2022.

Here are tidbits on her thoughtful article touching on A.I., Plato, the Internet, and education.

This Latest A.I. Tufekci explains, “ChatGPT, a conversational artificial intelligence program released recently by OpenAI, isn’t just another entry in the artificial intelligence hype cycle. It’s a significant advancement that can produce articles in response to open-ended questions that are comparable to good high school essays.”

Whence Its Smarts? ChatGPT is another example of machine-learning A.I. It is fed scads of stuff from the IoE, the Internet of Everything, enough so that its learning algorithm can come up with seemingly intelligent responses to queries about this and that. It does so by identifying probabilistic connections between words, zillions of them.
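For the curious, here is a toy sketch in Python of what "probabilistic connections between words" can mean at the smallest possible scale. It only counts which word follows which in a single made-up sentence, then turns the counts into probabilities. It is an illustration under stated assumptions, not a description of how ChatGPT is built: the function names `train_bigrams` and `next_word_probabilities` and the sample sentence are invented for this example, and real systems learn far richer connections from vastly more text.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn the raw follow-counts for one word into probabilities."""
    counts = follows.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the word was never seen with a successor
    return {w: n / total for w, n in counts.items()}


# Hypothetical one-sentence "corpus"; a real model would see billions of words.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)
probs = next_word_probabilities(model, "the")
print(probs)
```

Asked what tends to follow "the," this toy can only answer from the one sentence it has seen, which is the GIGO point in miniature: its answers are no better than what it was fed.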

Not to disparage machine learning. Humans accumulate knowledge in a similar way: We take in scads of stuff from reality. At first, this had nothing to do with reading. Poems like The Odyssey were likely performed orally. Homer wasn’t a writer; he was a storyteller.

Plato’s Plight. Tufekci writes, “Plato mourned the invention of the alphabet, worried that the use of text would threaten traditional memory-based arts of rhetoric. In his ‘Dialogues,’ arguing through the voice of Thamus, the Egyptian king of the gods, Plato claimed the use of this more modern technology would create ‘forgetfulness in the learners’ souls, because they will not use their memories,’ that it would impart ‘not truth but only the semblance of truth’ and that those who adopt it would ‘appear to be omniscient and will generally know nothing,’ with ‘the show of wisdom without the reality.’ ”

Plato’s Error. “Plato,” Tufekci says, “erred by thinking that memory itself is a goal, rather than a means for people to have facts at their call so they can make better analyses and arguments…. As Plato was wrong to fear the written word as the enemy, we would be wrong to think we should resist a process that allows us to gather information more easily.”

So why not just ask ChatGPT?

Tufekci notes, “… ChatGPT sometimes gave highly plausible answers that were flat-out wrong—something that its creators warn about in their disclaimers.”

Our Challenge. Recall from “A.I. Learns the Diplomacy Game” that one of the researchers noted that A.I. CICERO was occasionally “saying lots of crap” in the game.

Tufekci says, “Unless you already knew the answer or were an expert in the field, you could be subjected to a high-quality snow job.”

Recall the old computer adage, GIGO: Garbage in, garbage out. That is, humans still need reasoning skills to assess what A.I. produces from its vast acquired knowledge.

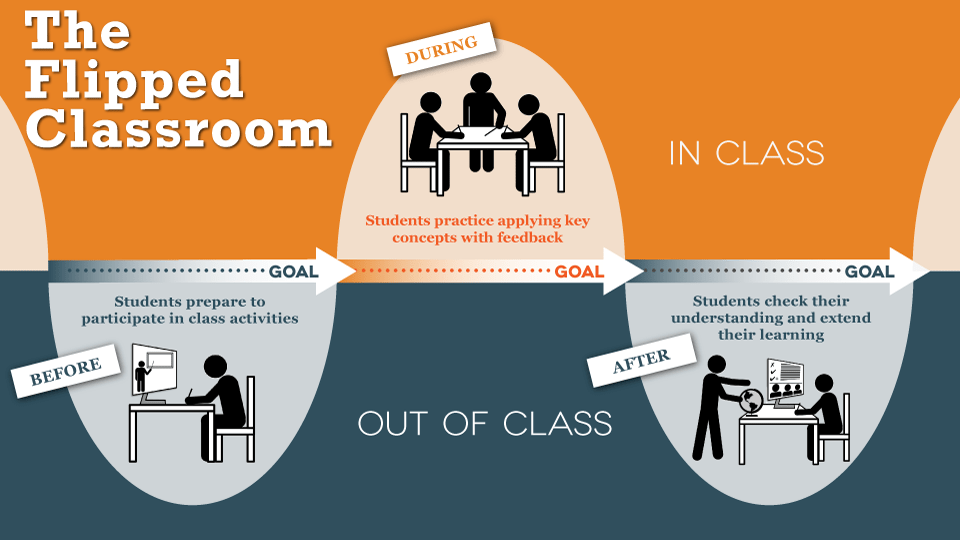

Flipping the Classroom. Tufekci describes a new pedagogy that hones these reasoning skills: “Rather than listen to a lecture in class and then go home to research and write an essay, students listen to recorded lectures and do research at home, then write essays in class, with supervision, even collaboration with peers and teachers. This approach is called flipping the classroom.”

“In flipped classrooms,” Tufekci says, “students wouldn’t use ChatGPT to conjure up a whole essay. Instead, they’d use it as a tool to generate critically examined building blocks of essays.”

She continues, “Teachers could assign a complicated topic and allow students to use such tools as part of their research. Assessing the veracity and reliability of these A.I.-generated notes and using them to create an essay would be done in the classroom, with guidance and instruction from teachers. The goal would be to increase the quality and the complexity of the argument.”

A Way Forward. Tufekci says, “The way forward is not to just lament supplanted skills, as Plato did, but also to recognize that as more complex skills become essential, our society must equitably educate people to develop them. And then it always goes back to the basics. Value people as people, not just as bundles of skills.”

She concludes with, “And that isn’t something ChatGPT can tell us how to do.” ds

© Dennis Simanaitis, SimanaitisSays.com, 2022

Dennis,

An excellent cut on Tufekci’s column! These technologies are fantastic new tools for people. (But too many will abuse the tools and their readers as well. Not a lot has changed here even though everything is changing in the longer term.) We have to learn how to better adapt, exploit and critically judge what we read and what other people say. We’re not very good at that.


Thank you for your kind words, Tom. I thought Tufekci’s article was particularly thoughtful. I liked the idea of the flipped classroom.

When mentoring younger engineers, I would ask them to attack some problems using basic hand methods because their instinct, even on simple stuff, was to use high-end computer software for everything. The manual approach would give them a better appreciation of what was going on. In one instance, one of them had ridiculously large structural steel sections on a relatively simple module structure (that’s what the computer told them). By doing a quick manual calculation, I was able to determine that their problem was the geometry they had laid out. In essence, the computer had given them the right answer to the wrong question (a variation on GIGO).

Any new tool can be really helpful. The high-powered structural software that we have these days is necessary to meet building code requirements, but a basic understanding of what you are doing is essential to using the software properly.

One of the major programs, utilized by many engineers worldwide, introduced an error in (at the time) the latest version. Results just did not make any sense to me. I communicated my concerns to the developer and about a week later they had another update that fixed the problem. Many inexperienced engineers might have taken the flawed results as correct.