Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

INFINITESIMAL CHIPS OFF THE OLD BLOCK: HAPPY BIRTHDAY, TRANSISTOR! PART 2

YESTERDAY, WE BEGAN CELEBRATING the transistor’s 75th birthday with a book review of Chip War: The Fight for the World’s Most Critical Technology. Today in Part 2, the American Association for the Advancement of Science joins the celebration with a cover story in Science, November 18, 2022.

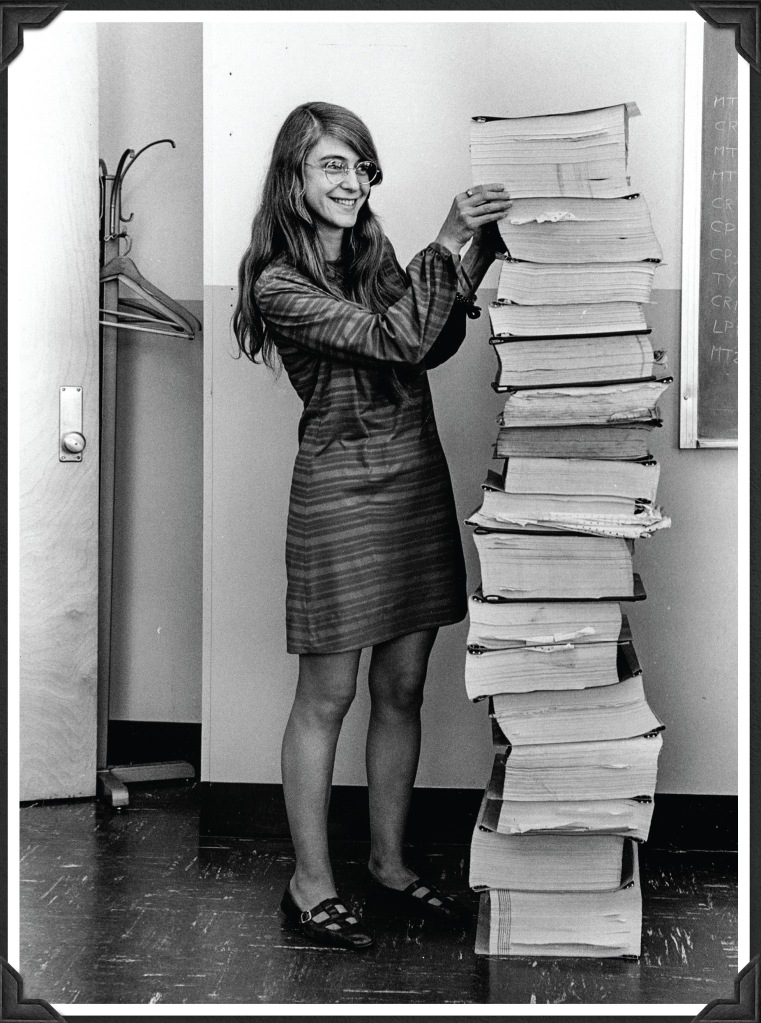

In “From One Transistor…”, Science, November 18, 2022, Phil Szuromi provides an introduction: “The reach of transistors is difficult to overestimate. Transistors not only transformed communications but also made computers widely available. In science and technology, high-power electronics enable gel electrophoresis of DNA and extensive computational and software resources enable genomic sequencing. The transistor-based Apollo mission guidance computers executed complex control tasks in real time, with the help of reliable software to operate them.”

Topics addressed in the Science Special Section include “Moore’s Law: The Journey Ahead,” three other technical articles, and a powerful Editorial.

Moore’s Law. Mark S. Lundstrom and Muhammad A. Alam write, “Although Moore’s law predicted a rate for the decrease in cost per transistor, it is popularly viewed in terms of transistor size, which for two-dimensional (2D) chip arrays translates into an areal size or ‘footprint.’ During the last 75 years, as the footprint has decreased from micrometer to nanometer scales, issues with implementing new fabrication technologies have raised concerns several times about the ‘end of Moore’s Law.’ ”

“Twenty years ago,” Lundstrom and Alam write, “a pessimistic outlook prevailed regarding the development of several difficult technologies for scaling to continue. In this context, one of the authors (M.S.L.) predicted that instead of slowing down, the scaling of metal-oxide-semiconductor field-effect transistors (MOSFETs) below the so-called 65-nm node, which was state-of-the-art in 2003, would continue unabated for at least a decade before the scaling limit was reached.”

They continue, “Scaling indeed continued from about 100 million transistors per chip in 2003 to as many as 100 billion transistors per chip today.”
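
As a rough back-of-the-envelope check on that quote, here is a minimal Python sketch of the implied doubling time. It assumes “today” means 2022 (the article's publication year) and takes the quoted round numbers at face value; the variable names are mine, not the authors'.

```python
import math

# Figures quoted by Lundstrom and Alam (round numbers).
start_count = 100e6   # transistors per chip, 2003
end_count = 100e9     # transistors per chip, "today"

# Assumption: "today" is 2022, the year the article appeared.
years = 2022 - 2003

# A 1000-fold increase is just under ten doublings.
doublings = math.log2(end_count / start_count)
doubling_time_years = years / doublings

print(f"{doublings:.2f} doublings, one every {doubling_time_years:.2f} years")
```

Under those assumptions the count doubled roughly every two years, which is in line with the popular statement of Moore's law.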

Three Platforms. Lundstrom and Alam say, “Two-dimensional (2D) nanoelectronics, three-dimensional (3D) terascale integration, and functional integration can all extend Moore’s law, but all face substantial challenges and fundamental limits.”

A Summary: Lundstrom and Alam conclude, “Shifting from nanoelectronics (focused on reducing the transistor dimension) to terascale electronics (driven by increasing transistor count and related functionality) defines the paradigm shift and core research challenges of the future.”

What’s Needed. “It will require fundamental advances in materials, devices, processing, and the design and manufacture of the most complex systems humans have ever built. Someday the electrical tunneling and thermal bottleneck will define the limits of 3D integration. Until then, Moore’s law will likely continue as researchers address the challenges of these extraordinarily complex electronic systems.”

A Shockley Editorial. In a Science Editorial, November 18, 2022, Editor-in-Chief H. Holden Thorp minces no words concerning transistor co-inventor William Shockley: “Shockley was a Racist and Eugenicist.”

In 1956, Shockley shared the Nobel Prize in Physics with John Bardeen and Walter Brattain. However, Thorp observes, “Appallingly, Shockley devoted the latter part of his life to promoting racist views, arguing that higher IQs among Blacks were correlated with higher extents of Caucasian ancestry, and advocating for voluntary sterilization of Black women.”

“At the time,” Thorp writes, “Science did not condemn Shockley for what he was: a charlatan who used his scientific credentials to advance racist ideology.”

“The lesson,” Thorp says, “is that we at Science need to make more effort to think about everything that we do, not only from the standpoint of communicating science to the public, but also as an organization that above all supports all of humanity.”

Conclusion: Thorp notes that as recently as 2001, Science described Shockley “simply as ‘a transistor inventor and race theorist.’ That won’t cut it anymore. As of today, a link to this editorial will appear along with any mention of Shockley in this journal.”

“The process of science is one of continual revision,” Thorp says, “but it’s also one that must have a conscience.” ds

© Dennis Simanaitis, SimanaitisSays.com, 2022

Wow. Of course, I learned about Shockley the scientist while getting my EE degree. I’m shokleyed to learn about his evil side. I had no idea about that until today. It is said we should never meet our heroes. What a despicable legacy.

I always wonder, does someone like that push so hard for such horrible ideas because he (and it is usually a “he”) stole his idea or part of his idea from the group he is trying to suppress?

I also wonder how many (not “if”) black engineers either were dissuaded from the field or blacklisted (no pun) thanks to Shockley? What a loss to humanity.

Jack Albrecht says: “[…] he (and it is usually a “he”) stole his idea or part of his idea from the group he is trying to suppress. […]”

Sometimes it’s two of ’em, Jack, like Nobelists, Crick and Watson who, when handed to them on a plagiarizing-plate by her boss, and unbeknownst to her, used Rosalind Franklin’s crystallography to finally fathom the double helix of DNA, and one of them admitted that Rosalind herself was only a few days away from cracking the double helix! No Nobel Prize for Rosalind, who wasn’t even allowed to dine with the males in their mess. I wonder if Crick and Watson famously..err..famishly feared that Rosalind might somehow eat into the profite_roles had she deservedly got a (wo)mention? Tsk.

The chart of 2D-3D-functional integration … the description of “functional integration” looks a lot like what we called “smart client-server” in the 1970s-80s, after PCs (even before IBM made them a Big Business thing) made offloading processing from the mainframe or big mini feasible. It’s pretty much how nearly all software is designed, separating the edge components (usually a user interface of some kind) from the back-end (services). There is processing at all levels, often to reduce the amount of data needing to be transmitted; there are many layers, in both hardware and software. So that in itself is not a new concept. New and performant ways to use it, like AI that works, are where progress is found. Improvements in hardware (the 2D-3D stuff), including on-chip networking, enable that.

To put this into perspective, when I was in Engineering school in the early 70s, we had an IBM 1130 computer with 8 K words (so I think 16 K bytes) of memory. With its punch card reader, console, CPU, and line printer, this machine occupied a room (probably about 225 sq ft). Now I wear a watch with 32 GB of memory, roughly 2 million times as much, and I don’t even want to do the size scale calculation.
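
For anyone who wants to check the memory ratio, here is a small Python sketch. It assumes the 1130's 16-bit words and binary units throughout (1 K = 1024, 1 GB = 1024³ bytes); the variable names are just for illustration.

```python
# Assumptions: 16-bit words on the IBM 1130, binary units (K = 1024, GB = 1024**3).
WORD_BITS = 16

ibm_1130_bytes = 8 * 1024 * WORD_BITS // 8   # 8 K words -> 16,384 bytes
watch_bytes = 32 * 1024**3                   # 32 GB

ratio = watch_bytes // ibm_1130_bytes
print(f"{ratio:,}")  # 2,097,152
```

With those units the watch holds about 2.1 million times the memory of the room-sized machine.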