Simanaitis Says

On cars, old, new and future; science & technology; vintage airplanes, computer flight simulation of them; Sherlockiana; our English language; travel; and other stuff

A.I.’S PLACE, HUMAN EFFORT, AND FRICTION-MAXXING PART 1

HUMANITY IS AT A CUSP OF INTELLECT, tantamount to moveable type’s effect on sharing thought, radio and television’s effect on communicating it, and the computer’s effect on amassing it. Today, artificial intelligence may be finessing humans out of the process.

Is this inevitable? Beneficial? Detrimental? Or, perhaps most important, controllable?

Several articles are ripe for gleaning. Tidbits follow here in Parts 1 and 2, today and tomorrow.

The Scaling of A.I. Donald MacKenzie has appeared here at SimanaitisSays before: See “Lasers Over Mahwah,” November 16, 2014; “Making (Or Losing) Zillions At (Almost) The Speed of Light,” March 21, 2019; and “Googling—For Fun and Profit,” November 29, 2025. Today, MacKenzie addresses “AI’s Scale” in London Review of Books, February 5, 2026. He observes, “The imperative to increase scale is deeply embedded in the culture of AI.”

This imperative, I suspect, is partly technical—and partly corporate greed.

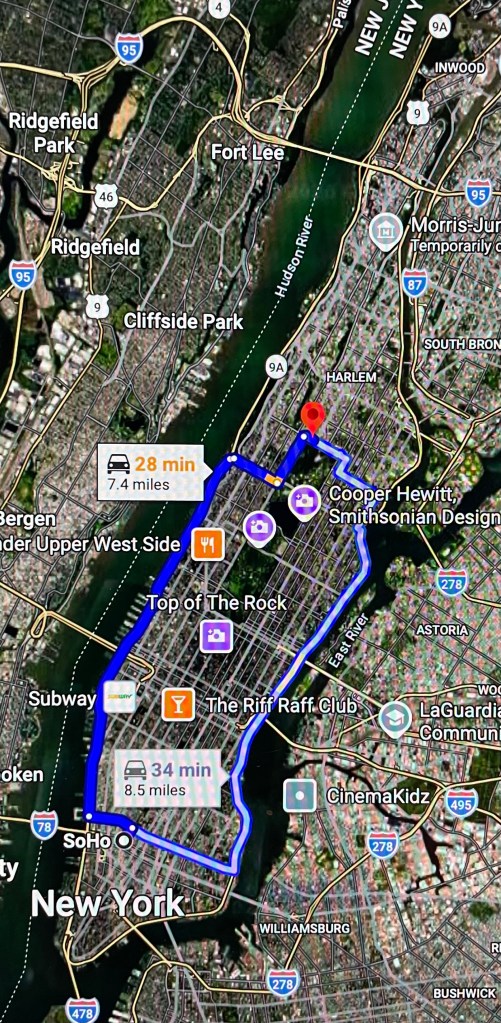

MacKenzie cites, “Hyperion is the name that Meta has chosen for a huge AI data centre it is building in Louisiana. In July, a striking image circulated on social media of Hyperion’s footprint superimposed on an aerial view of Manhattan. It covered a huge expanse of the island, from the East River to the Hudson, from Soho to the uptown edge of Central Park.” That is, half the area of Manhattan devoted to a single facility.

Image from Google Maps.

In another comparison, MacKenzie notes, “In August, researchers for Morgan Stanley estimated that $2.9 trillion will be spent globally on data centres between 2025 and 2028, while Citigroup has estimated total AI investment globally of $7.8 trillion between 2025 and 2030. (For comparison, the US defence budget is currently around $1 trillion per year.)”
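To put those totals on the same per-year footing as the defence figure, here is a rough back-of-envelope sketch. It assumes, purely for illustration, that each date range counts both of its end years and that spending is spread evenly across them; neither assumption comes from MacKenzie or from the banks he cites.

```python
# Back-of-envelope annualization of the figures quoted above.
# Assumptions (mine, not the source's): year ranges are inclusive,
# and spending is spread evenly across the years.

def per_year(total_trillions: float, first_year: int, last_year: int) -> float:
    """Average annual spend, in trillions of dollars, over an inclusive year range."""
    years = last_year - first_year + 1
    return total_trillions / years

data_centres = per_year(2.9, 2025, 2028)  # Morgan Stanley estimate
total_ai = per_year(7.8, 2025, 2030)      # Citigroup estimate
us_defence = 1.0                          # "around $1 trillion per year"

print(f"Data centres:      ~${data_centres:.2f} trillion per year")
print(f"All AI investment: ~${total_ai:.2f} trillion per year")
print(f"AI investment vs. US defence: ~{total_ai / us_defence:.1f}x")
```

Under these assumptions, data centres alone would run at roughly $0.7 trillion a year, and total AI investment at about $1.3 trillion a year, somewhat above the quoted defence budget.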

And in another, he recounts, “The graphics chips and data centres on which ‘arbitrary amounts’ are being spent require huge quantities of electricity to power them. Some of this is coming from renewable sources, but much of it involves burning natural gas or sometimes even coal. Just one of the many new gas-fired power plants that are being constructed in the U.S. to meet the growing demands of data centres is on the site of an old coal-fired power station near Homer City, Pennsylvania. When it is up and running it will generate 4.4 gigawatts, just a little more than the peak winter electricity demand for the whole of Scotland.”

Environmental Concerns. MacKenzie cites, “The International Energy Agency reckons that if the current global expansion of data centres continues, the CO2 emissions for which they are responsible, currently around 200 million tonnes per year, will be about 60 per cent higher by 2030.”

“In a rational world,” MacKenzie posits, “new AI data centres would be built only where ample renewable electricity is available to power them. But in a reckless race along the diminishing-returns curve, whatever fuel is immediately available will tend to get used. In the US, that still mostly means natural gas; in China, it’s coal.”

Hardly the idyllic setting for upscaling A.I.

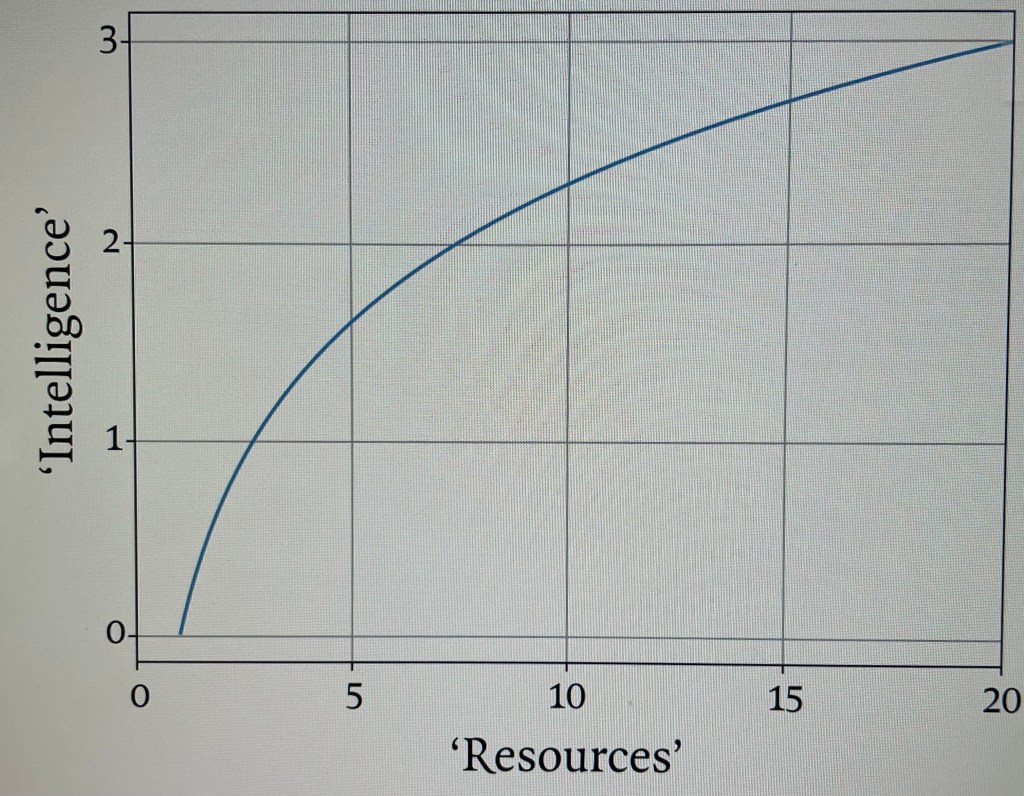

Bigger May Be Better—But By How Much?

“The more resources you put in,” MacKenzie writes, “the better the results, but the rate of improvement steadily diminishes.” He cites a post from the pro-upscaling Sam Altman stating that “the intelligence of an AI model roughly equals the log of the resources used to train and run it.” That is, the relationship is not linear but logarithmic.

A familiar logarithmic curve. Image from LRB.
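Here is a minimal sketch of what a logarithmic relationship implies if the quoted rule of thumb is taken literally. The function names, the base-10 logarithm, and the unitless "resources" are my assumptions for illustration; neither Altman nor MacKenzie specifies them.

```python
import math

def intelligence(resources: float) -> float:
    """Toy model: 'intelligence' as the base-10 log of resources (arbitrary units)."""
    return math.log10(resources)

def resources_needed(target: float) -> float:
    """Invert the toy model: resources required to reach a given score."""
    return 10 ** target

# Each tenfold increase in resources adds the same one-unit step.
for resources in (10, 100, 1_000, 10_000):
    print(f"resources = {resources:>6}: intelligence = {intelligence(resources):.1f}")
```

In this toy model, moving from a score of 1 to 2 costs 90 extra units of resources, while moving from 3 to 4 costs 9,000: the same gain, at a hundred times the price.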

And Whence the Data? Recall that the intelligence of a Large Language Model comes from scraping the Internet for data, then performing probabilistic “next word” algorithms. MacKenzie observes that there’s already a constraint on human-produced data; he cites OpenAI co-founder Ilya Sutskever’s observation that “we have but one internet … data is the fossil fuel of AI.”
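As a toy illustration of the "next word" idea, the sketch below counts which word follows which in a tiny made-up corpus and turns the counts into probabilities. This is a bigram model, a deliberately simplified stand-in: real Large Language Models use neural networks over sub-word tokens, and the corpus and function names here are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, how often each other word follows it."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def next_word_probs(follows: dict[str, Counter], word: str) -> dict[str, float]:
    """Turn the raw follow-counts for `word` into probabilities."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}

# Hypothetical miniature "internet" to learn from.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigrams(corpus)
print(next_word_probs(model, "the"))
```

With this corpus, "cat" follows "the" half the time, and "mat" and "fish" a quarter of the time each. The model knows nothing beyond what its training text contains, which is why the supply and quality of that text matter so much.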

And feeding the process A.I.-generated data only diminishes probabilistic accuracy, by virtue of less-than-reliable input.

The Maltese Quandary. MacKenzie recounts, “Another researcher I have spoken to, Lonneke van der Plas, who is a specialist in natural language processing, implicitly warns of the risk of training AI models on computer-generated data. Among the languages on which she works is Maltese, which has only around half a million native speakers. Much digitally available Maltese, she tells me, is low-quality machine translation. In consequence, she says, if you go all out for scale in developing a model of Maltese ‘you get a much worse system than if you carefully select the data’ and exclude the reams of poor-quality text.”

Recall GIGO: Garbage In, Garbage Out.

Tomorrow in Part 2, we’ll discuss two means of controlling this quandary: the 30% A.I. Rule and Friction-Maxxing. ds

© Dennis Simanaitis, SimanaitisSays.com, 2026

One comment on “A.I.’S PLACE, HUMAN EFFORT, AND FRICTION-MAXXING PART 1”

This entry was posted on March 14, 2026 by simanaitissays in Sci-Tech and tagged "A.I. Scale" Donald MacKenzie "London Review of Books", increasing scale is deeply embedded in A.I. culture, Meta's huge Hyperion centre in Louisiana (half the size of Manhattan), A.I. data centres budget $7.8 trillion '25-'30; $2.9 trillion '25-'28, energy for data centre: 4.4 gigawatts (winter demand for Scotland), IEA = International Energy Agency (predicts CO2 60% higher by 2030), "rational world": renewable energy for A.I. (in fact natural gas and coal), logarithmic curve: diminishing returns of intelligence with resources, Maltese Quandary: input data of LLM is machine-based (and hence error-rich).

Shortlink: https://wp.me/p2ETap-l9Z

Y’know ;

Back in the 60’s & early 70’s I used to watch those crappy C – grade Sci-Fi flicks on the grainy black & white T.V. .

Not too scary but these days I’m beginning to wonder if someone was paying attention to all those outlandish plots .

I remember one where a computer (AI) became self aware and somehow impregnated a woman, then locked her inside the fully computerized house until she gave birth to a baby whose mind was that of the computer. It ended as the baby opened its eyes and said “I. AM. ALIVE.”

Pretty corny right ? .

Maybe not in 2026 .

-Nate